The use of Artificial Intelligence (AI) in health research and innovation is becoming increasingly commonplace, ranging from studies to detect disease before symptoms arise, to improving patient care by tracking symptoms. However, working with sensitive patient health data presents a critical challenge for researchers, innovators and the health sector. Machine learning and AI models learn by analysing data and identifying patterns within it and, in some cases, they may retain sensitive information from the data they are trained on. This raises the question of how organisations can safely use AI models without disclosing patient information later on.

Governments and regulators have recognised this challenge. Across the UK and internationally there is growing focus on how AI can be developed and deployed safely when using sensitive health data, with new regulatory approaches and policy discussions emphasising the need for strong governance, transparency and human oversight.

Secure Data Environments (SDEs) or Trusted Research Environments (TREs) can help address this challenge by providing safe spaces where innovative AI models can be developed and tested safely, ethically and responsibly.

What are TREs and SDEs?

A TRE is a Trusted Research Environment. Also known as ‘Data Safe Havens’, TREs are highly secure computing environments that provide remote access to health data for approved researchers to use in research that can save and improve lives.

In 2023, NHS England established regional Secure Data Environments (SDEs) across the country, specifically to manage secure access to NHS patient data for research and analysis. Like TREs, SDEs are data storage and access platforms, which uphold the highest standards of privacy and security of sensitive health data.

As health research using AI advances, the teams developing SDEs need to implement tools and governance processes to ensure that machine learning models and research outputs can be assessed safely before leaving the environment.

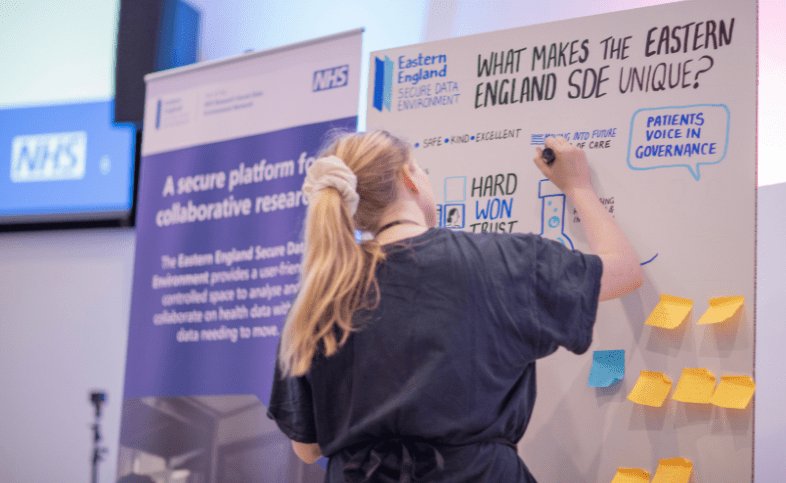

Health Innovation East’s health informatics team is working with the research and development team at Cambridge University Hospitals NHS Foundation Trust (CUH), and Cambridge University Health Partners (CUHP) to build this capability within the Eastern England SDE. Together, we are creating a test bed for the safe application of AI models to health data. The goal is to influence national standards and facilitate the safe use of AI with health data.

Turning standards into practical solutions

Working in partnership with CUHP and CUH, the team has delivered VISTA (Viable Implementation for SATRE, SACRO and Tools for AI) – a project funded by UK Research and Innovation and supported by the DARE UK programme as part of its TREvolution initiative.

VISTA focused on three key areas:

- How research involving AI models can be safely supported within SDEs

- How semi-automation (using software tools to highlight risks combined with expert review and approval) can be used to support the checking of research outputs before they leave the SDE

- How these complex technical processes can be governed, trusted and clearly explained to the public.

Over 15 months ending in March 2026, the VISTA project tested emerging national standards designed to strengthen the safe use of data and AI within SDEs and TREs, while evaluating governance and public perceptions of the use of AI with health data. By implementing these standards within the Eastern England SDE, the team was able to evaluate how they work in real research settings and contribute learning to the wider UK research community.

One focus of the project was SATRE (Standardised Architecture for Trusted Research Environments), a best practice framework that is used to assess whether a TRE meets recognised standards for governance, security and data management.

The health informatics team helped establish a working group to adopt SATRE as a shared reference framework and align it with key UK assurance standards, including the Digital Economy Act 2017 and the international information security standard ISO 27001. Achieving alignment with such standards will allow organisations involved in data research to reuse evidence across different assurance frameworks, reducing the administrative burden while increasing confidence in how sensitive health data is managed.

Improving how research outputs are checked

Another focus of the VISTA project was testing SACRO (Semi-Automated Checking of Research Outputs), a tool designed to support statistical disclosure control, which is the process used to ensure that research outputs cannot reveal sensitive information about individuals. This is especially important with sensitive health data.

Traditionally, reviewing research outputs such as tables, charts and analytical results has relied heavily on manual checks, which can be slow and laborious and can introduce risks caused by human error. SACRO enables a structured and consistent approach by identifying potential disclosure risks automatically and highlighting outputs that require closer review by a human.

This allows airlock managers (data specialists responsible for reviewing research outputs before they leave the secure environment) to prioritise their work and focus attention where it is most needed.

For the Eastern England SDE, this approach has already improved efficiency. Many low-risk outputs can now be reviewed within one to two working days, compared with three to five days when checks are conducted entirely manually.

Importantly, SACRO supports human oversight rather than replacing it. Airlock managers retain the final responsibility for deciding whether outputs can safely leave the secure environment.
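To make the idea of semi-automated checking concrete, here is a minimal sketch of one common statistical disclosure control rule: flagging table cells with small counts and passing only those outputs to a human for closer review. It is an illustration under stated assumptions, not SACRO's actual interface. The threshold of 10, the function names `check_table` and `triage`, and the example tables are all hypothetical.

```python
from dataclasses import dataclass

# Hypothetical minimum cell count; real thresholds are set by each environment's policy.
MIN_CELL_COUNT = 10


@dataclass
class TableCheck:
    name: str
    small_cells: list  # (row label, column label, count) for each flagged cell

    @property
    def status(self) -> str:
        # Flagged outputs go to a human reviewer; nothing is rejected automatically.
        return "review" if self.small_cells else "pass"


def check_table(name, table, threshold=MIN_CELL_COUNT):
    """Flag non-zero counts below the threshold in a {row: {column: count}} table."""
    small = [
        (row, col, count)
        for row, cols in table.items()
        for col, count in cols.items()
        if 0 < count < threshold
    ]
    return TableCheck(name=name, small_cells=small)


def triage(checks):
    """Order outputs so those with the most flagged cells are reviewed first."""
    return sorted(checks, key=lambda c: len(c.small_cells), reverse=True)


# Made-up frequency tables standing in for research outputs.
outputs = {
    "age_by_sex": {"18-39": {"F": 120, "M": 115}, "40+": {"F": 98, "M": 104}},
    "rare_condition_by_region": {
        "North": {"yes": 3, "no": 410},
        "South": {"yes": 14, "no": 395},
    },
}

checks = triage([check_table(name, table) for name, table in outputs.items()])
for check in checks:
    print(check.name, check.status, check.small_cells)
```

In this sketch the software only sorts and highlights. The decision to release an output stays with the airlock manager, which mirrors the division of responsibility described above.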

Testing how AI models can be governed safely

The VISTA project also explored how Secure Data Environments can support the safe development of machine learning models through SACRO-ML, a toolkit designed to assess whether an AI model could reveal information about individuals and whether it is safe to release.

To test this approach, the team ran a demonstrator project using synthetic data (artificially generated information) to train a machine learning model designed to predict the likelihood that a person has diabetes based on their healthcare history.

This allowed researchers to explore how machine learning models could move through the full SDE workflow, from project feasibility and risk assessment to model training and output checking.

Alongside this, the team developed a risk assessment toolkit for the Eastern England SDE. This provides a structured process for evaluating machine learning projects and identifying appropriate safeguards before machine learning models are released.
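One way to picture the question "could this model reveal information about individuals?" is a simple membership inference test: if a model is noticeably more confident on the records it was trained on than on records it has never seen, an attacker may be able to tell who was in the training data. The sketch below is an assumption-laden illustration of that idea only. It does not show SACRO-ML's actual interface or tests, and the function name `attack_auc`, the confidence values and the review threshold of 0.6 are all hypothetical.

```python
def attack_auc(member_scores, non_member_scores):
    """Chance that a random training record scores higher than a random unseen one.

    A value of 0.5 means the two groups are indistinguishable. Values close to
    1.0 suggest the model treats its training records differently, so it may
    leak who was in the training data.
    """
    wins = 0.0
    for m in member_scores:
        for n in non_member_scores:
            if m > n:
                wins += 1.0
            elif m == n:
                wins += 0.5  # ties count as a coin flip
    return wins / (len(member_scores) * len(non_member_scores))


# Illustrative values only: the model's confidence in the correct label
# for records it was trained on versus held-out records.
train_conf = [0.99, 0.97, 0.95, 0.90]
holdout_conf = [0.96, 0.80, 0.70, 0.60]

REVIEW_THRESHOLD = 0.6  # hypothetical cut-off for escalating to a human reviewer

auc = attack_auc(train_conf, holdout_conf)
decision = "needs closer review" if auc > REVIEW_THRESHOLD else "low risk"
print(f"attack AUC = {auc:.3f} -> {decision}")
```

As with output checking, a score like this would inform a risk assessment rather than replace one. A high value is a prompt for expert review and possible safeguards, not an automatic verdict.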

Building public trust in health data for research

Strong governance is central to how the Eastern England SDE operates. Researchers cannot download underlying data from the environment, and all research activity is monitored within the secure platform. The SDE holds ISO 27001 certification and undergoes regular testing to maintain high standards of information security.

Public involvement played an important role in shaping the development of the SDE. Members of the SDE’s Core Public Advisory Group contributed to discussions about AI governance, transparency and disclosure risk, helping ensure that complex technical processes are explained clearly and aligned with public expectations. The project’s patient involvement also demonstrated that complex topics need clear and transparent explanations for the public, even if work is happening behind the scenes.

Roseanna Fenessy, patient representative, said:

“Knowing that my data could help find better treatments for others is something I feel proud of. What matters to me is that it is used carefully, with proper oversight, and that patients have a real say in how it is used. The Eastern England SDE gives me confidence that those standards are being taken seriously.”

Enabling the future of AI-driven health research

As governments, regulators and healthcare systems look to harness the potential of AI, there is growing recognition that AI-led innovation must be supported by strong governance and public trust. SDEs and TREs are becoming a critical part of this infrastructure.

Through the VISTA project, the health informatics team is demonstrating how this can work in practice by providing a secure test bed where AI models can be developed, evaluated and governed using real-world health data. By helping shape emerging standards, testing new approaches and embedding public involvement in AI governance, we are helping to define how AI can be used safely across the UK health data ecosystem.

Stronger operational assurance and scalable governance

With the VISTA project complete, the practical impact is stronger operational assurance and more scalable governance.

- SATRE helps organise evidence of the standards used across technical, operational and governance teams, supporting targeted improvements such as clearer documentation, better reporting and training needs analysis.

- SACRO improves reviews while preserving human accountability: Eastern England SDE reviewers observed that many low-risk outputs can be approved in 1–2 working days, compared with a typical 3–5 days for fully manual review, with clearer audit trails and more consistent application of thresholds.

- For machine learning, SACRO-ML and the associated toolkit provide a repeatable way to assess whether a trained model could leak sensitive information, enabling the Eastern England SDE to support a broader range of AI research with controls that can be evidenced and justified.

For further information and links to the published VISTA project outputs, read the final VISTA report.
